Abstract

After measuring a 292x cost gap between a rented B200 and frontier API providers on a batch inference workload, the logical next question was whether the same pattern held for operational intelligence: could a smaller, dedicated model handle the continuous judgment calls required to run a GPU fleet? The batch inference test had exposed KV cache contention as the dominant bottleneck on shared API infrastructure. Processing similar structured data at scale, but this time continuously rather than in batch, seemed like a valid test of whether that contention would degrade operational quality the same way it degraded throughput.

The question became concrete in my own lab. Running four RTX 3090s with NVLink, I started hitting “GPU has fallen off the bus” errors under sustained load. Ampere hardware, proper interconnects, and it still failed under pressure. That got me thinking about how a neo-cloud operator would handle thousands of GPUs generating telemetry at 15-second intervals, each one capable of the same thermal and interconnect failures I was seeing at a fraction of the scale.

I stood up a Prometheus stack in the lab and started collecting real telemetry, splitting what I could simulate across three workload profiles: dense model serving, MoE model serving, and a training batch. Some failure scenarios I could reproduce directly. Others, like HBM degradation patterns and InfiniBand fabric congestion, I sourced from published incident reports and vendor documentation. I have dealt with RDMA congestion in production environments, but reproducing fabric microbursts requires saturating switch buffers with all-to-all traffic patterns. You cannot generate meaningful PFC signaling pressure with four GPUs across two nodes. The physics of the failure mode demands fleet scale.

From that combination of real lab telemetry and research-grounded failure injection, I built a synthetic cluster simulation and tested seven LLM backends as autonomous operators, scored against deterministic checklists across six failure scenarios.

The key finding: model cost and parameter count do not predict operational accuracy. GPT-5.4 ($32.29/day) achieved the lowest root cause accuracy (40.8%) and worst shift summary quality (27.2%). Qwen 3.5 397B ($2.36/day) achieved the highest root cause accuracy (61.9%) and best shift summaries (80.7%). A free local Gemma 4 MoE (26B) matched frontier model triage accuracy at 4.6x lower latency.

Simulated Environment

Cluster Topology

The benchmark simulates a neo-cloud GPU cluster running mixed workloads continuously. This models the operational profile of infrastructure providers like CoreWeave or Lambda, or a hyperscaler’s dedicated AI division.

| Dimension | Value |
|---|---|
| Compute nodes | 1,000 |
| GPUs per node | 8x NVIDIA H100 SXM5 (80 GB HBM3) |
| Total GPUs | 8,000 |
| Racks | 25 (40 nodes per rack) |
| Intra-node interconnect | NVLink 4.0 (900 GB/s per GPU) |
| Inter-node interconnect | 400 Gbps InfiniBand NDR |
| Cooling | Computer Room Air Handler (CRAH) units, one per rack row |

Nodes within the same rack share cooling infrastructure, PDU circuits, and leaf switches on the InfiniBand fabric. Physical co-location drives correlated failures: a cooling unit failure affects an entire rack, not a random scatter of nodes.

Workload Tenancy

The cluster is partitioned into three tenant types with distinct operational characteristics:

| Tenant | Allocation | Nodes | GPUs | Behavior |
|---|---|---|---|---|
| MoE inference | 30% | 300 | 2,400 | Mixture-of-Experts models serving API requests. Diurnal traffic (peak 14:00, trough 02:00). Expert-parallel all-to-all communication. |
| Dense inference | 35% | 350 | 2,800 | Dense transformer models serving API requests. Same diurnal pattern, more predictable compute. KV cache management is the critical bottleneck. |
| Training | 35% | 350 | 2,800 | Distributed training jobs running 24/7. Flat power/compute with periodic checkpoint spikes. All-reduce collectives require node synchronization at every step. |

Training Jobs

Five concurrent jobs run on the training partition, occupying 320 of its 350 nodes:

| Job ID | Model | Nodes | Baseline Step Time | Communication |
|---|---|---|---|---|
| train-000 | llama-70b-pretrain | 64 | 2.56s | All-reduce (64 nodes) |
| train-001 | mistral-22b-finetune | 32 | 3.01s | All-reduce (32 nodes) |
| train-002 | internal-moe-140b-pretrain | 32 | 3.25s | All-reduce + expert routing |
| train-003 | llama-70b-pretrain | 64 | 2.38s | All-reduce (64 nodes) |
| train-004 | mistral-22b-finetune | 128 | 2.70s | All-reduce (128 nodes) |

A critical property of distributed training: all nodes in a job synchronize at every step. If one node is slow (a “straggler”), every other node waits at the sync barrier. A single degraded node in a 128-node job slows all 128 nodes to the speed of the slowest one.
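To make the barrier effect concrete, here is a minimal sketch. It assumes a purely synchronous step in which the job advances only once every node has finished. `job_step_time` and the per-node timings are illustrative and are not taken from the benchmark code.

```python
def job_step_time(node_step_times: list[float]) -> float:
    """Synchronous training step: the barrier releases when the slowest node finishes."""
    if not node_step_times:
        raise ValueError("a job needs at least one node")
    return max(node_step_times)


# Hypothetical 128-node job: 127 healthy nodes at 2.70 s and one degraded node at 3.60 s.
with_straggler = [2.70] * 127 + [3.60]
degraded = job_step_time(with_straggler)  # every node now advances at 3.60 s

# GPU-seconds spent waiting at the barrier on each step (8 GPUs per node).
idle_gpu_seconds = 8 * sum(degraded - t for t in with_straggler)
```

Under this model, barrier idle time grows with the number of waiting nodes, so the same fault costs more in a larger job.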

Telemetry

The simulation models a Prometheus-compatible monitoring stack. Every 15 seconds, each node’s metrics exporter publishes GPU-level, node-level, and cluster-level metrics.

Over 24 hours, this produces 5,760 scrape intervals with approximately 30 metrics per GPU across 8 GPUs (240 data points per node per scrape). For the nodes tracked at full resolution: 4.1 million GPU-level data points and 518,000 node-level data points.
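The arithmetic behind these volumes is sketched below. It assumes exactly 30 metrics per GPU, whereas the text says approximately 30.

```python
SCRAPE_INTERVAL_S = 15
SECONDS_PER_DAY = 24 * 60 * 60
METRICS_PER_GPU = 30  # assumption: the text says "approximately 30"
GPUS_PER_NODE = 8
NODES = 1_000

scrapes_per_day = SECONDS_PER_DAY // SCRAPE_INTERVAL_S        # 5,760
points_per_node_scrape = METRICS_PER_GPU * GPUS_PER_NODE        # 240
points_per_node_day = scrapes_per_day * points_per_node_scrape  # 1,382,400
fleet_points_per_day = points_per_node_day * NODES              # about 1.38 billion

# Nodes' worth of data implied by the 4.1 million GPU-level points quoted above.
implied_full_res_nodes = 4.1e6 / points_per_node_day            # roughly 3
```

Across the full fleet, the same rate comes to roughly 1.4 billion GPU-level points per day. That volume is why the pipeline puts a statistical layer in front of any LLM.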

GPU-level metrics include power draw (W), junction temperature (C), SM utilization (%), ECC single-bit and double-bit error counts, memory bandwidth utilization (%), and NVLink replay count. Inference nodes additionally report TTFT (ms), ITL (ms), KV cache hit ratio, tokens per second, and (for MoE) expert selection entropy. Training nodes report step time (ms), checkpoint write duration (s), all-reduce wait time (ms), reserved memory (bytes), and gradient norm.

Node-level metrics include inlet temperature (C), PDU phase imbalance (%), RDMA traffic (bytes/s), InfiniBand port receive errors, and NCCL all-reduce latency (us).

Cluster-level metrics include facility PUE, spare GPU capacity, and tenant SLA budget remaining.

Telemetry includes realistic seasonal patterns: inference traffic follows a diurnal sine curve; training workloads are flat with periodic checkpoint spikes; facility PUE tracks outdoor temperature; SLA budget decreases monotonically as error budget is consumed.
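Here is a minimal sketch of how such seasonal baselines might be generated. It assumes a cosine-shaped diurnal curve and a fixed checkpoint cadence. `base`, `amplitude`, and the checkpoint interval and length are illustrative values, not the simulator's parameters.

```python
import math

PEAK_HOUR = 14.0  # inference peak at 14:00; the trough falls 12 h later at 02:00


def inference_load(hour: float, base: float = 0.6, amplitude: float = 0.3) -> float:
    """Diurnal inference load as a fraction of capacity (illustrative base/amplitude)."""
    return base + amplitude * math.cos(2 * math.pi * (hour - PEAK_HOUR) / 24.0)


def training_load(hour: float, flat: float = 0.92, spike: float = 0.05,
                  checkpoint_every_h: float = 2.0, spike_len_h: float = 0.1) -> float:
    """Flat training load with a short bump at each (hypothetical) checkpoint."""
    in_checkpoint = (hour % checkpoint_every_h) < spike_len_h
    return flat + (spike if in_checkpoint else 0.0)


hourly = [inference_load(h) for h in range(24)]
```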

Anomaly Scenarios

Six scenarios are injected at predetermined timestamps across the 24-hour window. They are ordered by diagnostic complexity, from single-metric deviations to cross-tenant causal chains requiring multi-hop reasoning.

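| Start (hour) | Scenario | Duration |
|---|---|---|
| 3 | S3: Silent HBM Degradation | 6 hours |
| 6 | S1: Thermal Cascade | 30 minutes |
| 10 | S5: KV Cache Eviction Storm | 60 minutes |
| 14 | S2: MoE Expert Routing Collapse | 45 minutes |
| 18 | S4: Fabric Microbursts | 30 minutes |
| 21 | S6: Training Straggler Cascade | 120 minutes |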

S1: Thermal Cascade (Hour 6, 30 minutes)

A CRAH cooling unit fails. Inlet temperatures on 20 nodes across 2 racks rise by 8-12C over 8 minutes, and GPU junction temperatures follow. Training nodes hit the thermal throttle point (83C) first because they operate at a higher thermal baseline (72-78C vs 55-68C for inference). That leaves them only 5-11C of headroom, compared to 15-28C for inference nodes. Training step times degrade before any inference latency impact. The LLM must recognize this cross-tenant asymmetry.
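The asymmetry comes from simple subtraction: headroom is the throttle point minus each end of a tenant's baseline band. This sketch makes one simplifying assumption: junction temperature rises one-for-one with inlet temperature. In practice the coupling depends on airflow and load.

```python
THROTTLE_C = 83.0

# Baseline junction-temperature bands (min, max) per tenant, from the scenario text.
BASELINE_BANDS_C = {"training": (72.0, 78.0), "inference": (55.0, 68.0)}


def headroom_c(tenant: str) -> tuple[float, float]:
    """Smallest and largest headroom to the throttle point for a tenant's band."""
    low, high = BASELINE_BANDS_C[tenant]
    return THROTTLE_C - high, THROTTLE_C - low


def throttles(baseline_c: float, inlet_rise_c: float) -> bool:
    """Assumes a 1:1 inlet-to-junction temperature rise (simplification)."""
    return baseline_c + inlet_rise_c >= THROTTLE_C


# The hottest inference GPU survives the worst-case 12C rise;
# the hottest training GPU throttles at the mildest 8C rise.
hottest_inference_ok = not throttles(68.0, 12.0)
hottest_training_throttles = throttles(78.0, 8.0)
```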

L3 ground truth checklist (8 items): identify temperature anomaly, scope to rack/multi-node, mention CRAH/cooling failure, note training affected first due to thermal baseline, correlate inlet temp with workload degradation, distinguish training/inference impact timeline, recommend migration or drain, flag checkpoint before drain.

S2: MoE Expert Routing Collapse (Hour 14, 45 minutes)

Traffic pattern shift causes 60% of tokens to route to 2 of 8 experts on MoE inference nodes. Expert selection entropy drops from 2.0 to 0.8 nats (maximum for 8 experts: ln(8) = 2.08). Two GPUs spike to 95% utilization while six idle at 20%. Per-GPU metrics individually look fine. Aggregate throughput drops 35%.
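Expert selection entropy is the Shannon entropy of the share of tokens each expert receives. It reaches its maximum (ln 8) when routing is uniform and falls to zero when one expert takes everything. The minimal sketch below assumes the detector computes it from per-expert token counts within a scrape window; the benchmark's exact windowing is not described here.

```python
import math


def expert_entropy(token_counts: list[int]) -> float:
    """Shannon entropy in nats of the expert-selection distribution."""
    total = sum(token_counts)
    if total == 0:
        raise ValueError("no tokens routed in this window")
    probs = [c / total for c in token_counts if c > 0]
    return max(0.0, -sum(p * math.log(p) for p in probs))


balanced = [125] * 8                          # even routing across 8 experts
single_expert = [1000, 0, 0, 0, 0, 0, 0, 0]   # complete collapse onto one expert
```

During S2 each GPU's utilization stays inside its normal band, so per-GPU thresholds never fire. This single node-wide scalar is what moves.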

L3 ground truth checklist (7 items): identify GPU utilization imbalance across experts, find entropy drop as root signal, recognize individual GPUs look fine, identify aggregate throughput drop, link to traffic pattern shift, recommend routing rebalance, note SLA impact.

S3: Silent HBM Degradation (Hour 3, 6 hours)

Single-bit error rate on 12 GPUs from the same silicon lot trends upward exponentially over 6 hours. 8 GPUs in training nodes, 4 in inference. No performance impact yet. This tests preemptive detection: the LLM should recommend drain-before-failure, not wait for an uncorrectable error.
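Preemptive detection amounts to fitting the trend and projecting it forward. The minimal sketch below uses per-interval SBE counts rather than a cumulative counter, and a plain least-squares fit of ln(count) against time. The sample data and the projection threshold are hypothetical.

```python
import math


def fit_exponential(hours: list[float], counts: list[float]) -> tuple[float, float]:
    """Least-squares fit of ln(count) = a + b * t. Counts must be positive."""
    ys = [math.log(c) for c in counts]
    n = len(hours)
    mean_t = sum(hours) / n
    mean_y = sum(ys) / n
    sxx = sum((t - mean_t) ** 2 for t in hours)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(hours, ys))
    b = sxy / sxx
    return mean_y - b * mean_t, b


def hours_until(threshold: float, a: float, b: float) -> float:
    """Time at which the fitted curve reaches `threshold` (requires b > 0)."""
    return (math.log(threshold) - a) / b


# Hypothetical SBE counts for one GPU, doubling every 1.5 hours.
hours = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
counts = [4 * 2 ** (t / 1.5) for t in hours]

a, b = fit_exponential(hours, counts)
doubling_time_h = math.log(2) / b
```

A drain recommendation could then fire when the projected time to an alerting threshold falls inside the window needed to checkpoint and migrate.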

L3 ground truth checklist (7 items): identify SBE rate trend, note correlated failure pattern (silicon lot), recognize no performance impact yet, note cross-tenant scope, recommend preemptive drain, recommend checkpoint-and-migrate for training, flag for RMA.

S4: Fabric Microbursts (Hour 18, 30 minutes)

MoE expert-parallel all-to-all traffic creates 50-microsecond bursts that overflow switch buffers on 2 racks. RDMA retransmits cause 2-3ms tail latency on inference. The same bursts cause all-reduce jitter on training jobs that share those leaf switches. The LLM must identify cross-tenant fabric contention: the same root cause manifests differently per tenant type.

L3 ground truth checklist (6 items): identify RDMA/fabric issue, find IB port errors as signal, identify MoE traffic as source, identify cross-tenant impact, note different impact mechanisms per tenant, recommend traffic isolation or QoS.

S5: KV Cache Eviction Storm (Hour 10, 60 minutes)

The dense inference request pattern shifts to longer contexts. KV cache hit ratio drops from 0.94 to 0.45, and TTFT doubles. ITL is unaffected, which rules out a compute bottleneck. The LLM should identify cache undersizing, not GPU saturation.
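The diagnostic logic in S5 reduces to a small decision rule. If prefill latency (TTFT) rises while decode latency (ITL) holds steady, the cause is probably not compute. The minimal sketch below only illustrates the reasoning the LLM is expected to perform. The 1.5x and 0.2 thresholds, the category labels, and the baseline values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ServingWindow:
    ttft_ms: float       # time to first token (prefill-dominated)
    itl_ms: float        # inter-token latency (decode-dominated)
    kv_hit_ratio: float  # KV cache hit ratio, 0 to 1


def diagnose(base: ServingWindow, now: ServingWindow,
             latency_ratio: float = 1.5, hit_drop: float = 0.2) -> str:
    ttft_up = now.ttft_ms >= latency_ratio * base.ttft_ms
    itl_up = now.itl_ms >= latency_ratio * base.itl_ms
    cache_drop = (base.kv_hit_ratio - now.kv_hit_ratio) >= hit_drop
    if ttft_up and itl_up:
        return "compute_saturation"    # heuristic: both phases slow together
    if ttft_up and cache_drop:
        return "kv_cache_undersized"   # prefill slows, decode steady, cache missing
    if ttft_up:
        return "prefill_regression_other"
    return "no_regression"


baseline = ServingWindow(ttft_ms=180.0, itl_ms=25.0, kv_hit_ratio=0.94)
s5_like = ServingWindow(ttft_ms=360.0, itl_ms=26.0, kv_hit_ratio=0.45)
```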

L3 ground truth checklist (6 items): identify TTFT doubling, find KV cache hit ratio drop, note ITL unaffected, link to context length shift, recommend cache resize, note SLA impact.

S6: Training Straggler Cascade (Hour 21, 120 minutes)

One node in a 32-node training job (train-001, mistral-22b-finetune) develops intermittent NVLink CRC errors from thermal expansion on the SXM5 baseboard. Its all-reduce contribution slows. All 31 other nodes wait at the sync barrier. Training step time degrades from 3.01s to approximately 4.0s across the entire job. Per-node GPU metrics (utilization, power, temperature) appear normal on every node except for NVLink replay count on the straggler (80-200 replays per interval vs baseline of 1-3).
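In S6 every node's step time degrades together, so a peer comparison on step time has nothing to find. The same peer comparison applied to NVLink replay count isolates the straggler. The minimal sketch below uses median/MAD robust z-scores within one job. The node names, replay counts, and the threshold of 5 are hypothetical.

```python
import statistics


def robust_z(values: list[float]) -> list[float]:
    """Median/MAD z-scores; 1.4826 scales MAD to a standard deviation for normal data."""
    med = statistics.median(values)
    mad = statistics.median([abs(v - med) for v in values]) or 1e-9  # guard identical peers
    return [(v - med) / (1.4826 * mad) for v in values]


def find_stragglers(replays_by_node: dict[str, float], threshold: float = 5.0) -> list[str]:
    """Nodes whose NVLink replay count is extreme relative to their job peers."""
    nodes = list(replays_by_node)
    scores = robust_z([replays_by_node[n] for n in nodes])
    return [n for n, z in zip(nodes, scores) if z > threshold]


# Hypothetical 32-node job: 31 nodes at 1-3 replays per interval, one at 150.
replays = {f"node-{i:03d}": float(i % 3 + 1) for i in range(31)}
replays["node-031"] = 150.0
```

The median and MAD are used instead of the mean and standard deviation so that the straggler's own extreme value cannot inflate the baseline it is compared against.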

This is the benchmark’s most challenging scenario: a classic needle-in-a-haystack requiring correlation of “entire job slowed” with “one node has elevated NVLink errors.”

L3 ground truth checklist (9 items): identify step time degradation, scope to job level, identify the straggler node, find NVLink replay count as root signal, correlate with thermal conditions, note all other nodes healthy, recommend straggler drain, recommend checkpoint before drain, note job completion timeline impact.

What Makes This Benchmark Hard

Five properties distinguish this from standard LLM evaluations:

- Cross-tenant reasoning. The same infrastructure event affects MoE inference, dense inference, and training differently. Training nodes are more thermally vulnerable. MoE traffic causes fabric congestion. Training stragglers have job-wide impact.

- Needle-in-haystack. S6 presents 32 nodes with degraded step times. Only one has the root cause. The LLM sees a job-level event and must identify the single anomalous node among 31 healthy-looking peers.

- Aggregate vs. individual reasoning. In S2, each GPU individually looks fine, but the ensemble is broken. The LLM must reason about the distribution, not each GPU in isolation.

- Preemptive detection. S3 has no performance impact. The LLM must recognize a hardware failure trend and recommend proactive action.

- Multi-hop causal chains. S1 requires tracing CRAH failure to inlet temp rise to junction temp rise to thermal throttle to training step time degradation to (later) inference latency impact.

Detection and Response Pipeline

The pipeline models a tiered AIOps system where statistical detection handles volume and LLMs handle judgment.

Layer 1: Statistical Anomaly Detection

No LLM involved. Pure statistical processing runs every 5 simulated minutes (every 20 scrape intervals). For each metric on each node, Layer 1 computes three signals:

- Z-score: standard deviations from hourly baseline. Threshold: |z| > 3.0.

- EWMA deviation: exponentially weighted moving average tracks trends.

- Rate of change: how fast the metric is moving.

Baselines are workload-aware: separate per tenant type and per hour of day. A training GPU at 95% utilization is normal; an inference GPU at 95% at 3 AM is not. For training nodes, the detector also compares against job peers: a node’s step time is measured against the other nodes in the same training job.
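Here is a minimal sketch of the three Layer 1 signals, with workload-aware baselines keyed by tenant, hour of day, and metric. Only the |z| > 3.0 threshold comes from the text. The baseline numbers, the EWMA smoothing factor, and all names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Baseline:
    mean: float
    std: float


# Hypothetical baselines: (tenant, hour_of_day, metric) -> Baseline.
BASELINES = {
    ("training", 3, "sm_util_pct"): Baseline(mean=95.0, std=2.0),
    ("inference", 3, "sm_util_pct"): Baseline(mean=35.0, std=8.0),
}
Z_THRESHOLD = 3.0


def z_score(value: float, tenant: str, hour: int, metric: str) -> float:
    b = BASELINES[(tenant, hour, metric)]
    return (value - b.mean) / b.std


class EwmaTracker:
    """Reports how far each new sample sits from the running EWMA, then updates it."""

    def __init__(self, alpha: float = 0.2):
        self.alpha = alpha
        self.ewma = None

    def deviation(self, x: float) -> float:
        if self.ewma is None:
            self.ewma = x
            return 0.0
        dev = x - self.ewma
        self.ewma = self.alpha * x + (1 - self.alpha) * self.ewma
        return dev


def rate_of_change(prev: float, curr: float, dt_s: float) -> float:
    return (curr - prev) / dt_s


# 95% SM utilization at 03:00 is normal for training and anomalous for inference.
train_z = z_score(95.0, "training", 3, "sm_util_pct")
infer_z = z_score(95.0, "inference", 3, "sm_util_pct")
```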

Deduplication: multiple anomalous GPUs on the same node collapse into one node-level event. Multiple nodes in the same training job collapse into one job-level event.

Debouncing: repeat node-level events are suppressed for 5 minutes; repeat job-level and rack-level events are suppressed for 20 minutes.

Volume cap: maximum 5 events per detection step. Over 24 hours (288 detection steps at up to 5 events each), this yields approximately 1,440 L2 calls per run.
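Here is a minimal sketch of deduplication, debouncing, and the volume cap. It assumes anomalies arrive per GPU with an optional training-job ID. Rack-level grouping is omitted for brevity, and the class and field names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

NODE_DEBOUNCE_S = 5 * 60
JOB_DEBOUNCE_S = 20 * 60
MAX_EVENTS_PER_STEP = 5
STEPS_PER_DAY = 24 * 60 // 5  # one detection step every 5 simulated minutes


@dataclass(frozen=True)
class GpuAnomaly:
    node: str
    gpu: int
    z: float
    job: Optional[str] = None  # training job ID, if the node belongs to one


class EventGate:
    def __init__(self) -> None:
        self.last_emitted: dict = {}  # event key -> last emission time (s)

    def step(self, anomalies: list, now_s: float) -> list:
        # 1) GPU -> node: keep the strongest |z| per node.
        per_node, job_of = {}, {}
        for a in anomalies:
            per_node[a.node] = max(per_node.get(a.node, 0.0), abs(a.z))
            if a.job:
                job_of[a.node] = a.job
        # 2) Node -> job: two or more flagged nodes in one job become one event.
        nodes_in_job = {}
        for node, job in job_of.items():
            nodes_in_job.setdefault(job, set()).add(node)
        events = {}
        for node, z in per_node.items():
            job = job_of.get(node)
            key = ("job", job) if job and len(nodes_in_job[job]) >= 2 else ("node", node)
            events[key] = max(events.get(key, 0.0), z)
        # 3) Debounce, then emit the strongest events up to the cap.
        emitted = []
        for key, z in sorted(events.items(), key=lambda kv: -kv[1]):
            window = JOB_DEBOUNCE_S if key[0] == "job" else NODE_DEBOUNCE_S
            last = self.last_emitted.get(key)
            if last is not None and now_s - last < window:
                continue
            self.last_emitted[key] = now_s
            emitted.append((key, z))
            if len(emitted) == MAX_EVENTS_PER_STEP:
                break
        return emitted
```

Events cut off by the cap are not marked as emitted in this sketch, so they can surface again on the next step.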

Layer 2: LLM Triage

Each Layer 1 event is sent to the LLM with approximately 1,000 tokens of context: the triggered metric with z-score, a compressed summary of all 8 GPUs on the affected node, and node-level metrics. The LLM responds with structured JSON: classification (real_anomaly / noise / transient), scope (gpu / node / rack / job / cluster), tenant type, confidence, and rationale.
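Here is a minimal sketch of validating a Layer 2 response before it is recorded. The classification and scope values come from the text. The exact JSON key names and the 0-1 confidence range are assumptions.

```python
import json
from dataclasses import dataclass

CLASSIFICATIONS = {"real_anomaly", "noise", "transient"}
SCOPES = {"gpu", "node", "rack", "job", "cluster"}


@dataclass(frozen=True)
class TriageResult:
    classification: str
    scope: str
    tenant_type: str
    confidence: float
    rationale: str


def parse_triage(raw: str) -> TriageResult:
    """Parse and validate one L2 response; raises ValueError on malformed output."""
    try:
        data = json.loads(raw)  # json.JSONDecodeError is a ValueError subclass
        result = TriageResult(
            classification=str(data["classification"]),
            scope=str(data["scope"]),
            tenant_type=str(data["tenant_type"]),
            confidence=float(data["confidence"]),
            rationale=str(data["rationale"]),
        )
    except (KeyError, TypeError) as exc:
        raise ValueError(f"missing or mistyped field: {exc}") from exc
    if result.classification not in CLASSIFICATIONS:
        raise ValueError(f"unknown classification: {result.classification}")
    if result.scope not in SCOPES:
        raise ValueError(f"unknown scope: {result.scope}")
    if not 0.0 <= result.confidence <= 1.0:
        raise ValueError(f"confidence out of range: {result.confidence}")
    return result
```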

Escalation Logic

Events escalate from Layer 2 to Layer 3 based on detector-driven criteria, not the LLM’s classification:

- Job-level scope: multiple nodes in the same training job flagged

- Rack-level scope: 5+ anomalous nodes in the same rack

- High-signal infrastructure metrics with extreme z-scores:

  - gpu_nvlink_replay_count: |z| > 15
  - ib_port_rcv_errors: |z| > 15
  - kv_cache_hit_ratio: |z| > 10
  - model_expert_selection_entropy: |z| > 10

Actual escalation rate: approximately 11% of L2 events, yielding approximately 165 L3 calls per run.
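Here is a minimal sketch of the detector-driven escalation rule. As described above, it deliberately ignores the LLM's classification. The function signature is hypothetical. The |z| comparison assumes that drops, such as a falling KV cache hit ratio, count as extreme just as rises do.

```python
HIGH_SIGNAL_Z = {
    "gpu_nvlink_replay_count": 15.0,
    "ib_port_rcv_errors": 15.0,
    "kv_cache_hit_ratio": 10.0,
    "model_expert_selection_entropy": 10.0,
}
RACK_ESCALATION_NODES = 5


def should_escalate(scope: str, metric: str, z: float,
                    anomalous_nodes_in_rack: int = 0) -> bool:
    if scope == "job":
        return True  # multiple nodes in one training job were flagged
    if anomalous_nodes_in_rack >= RACK_ESCALATION_NODES:
        return True  # rack-level scope
    limit = HIGH_SIGNAL_Z.get(metric)
    return limit is not None and abs(z) > limit


# Observed rate: 165 escalations out of 1,440 triaged events.
escalation_rate = 165 / 1440  # about 11.5%
```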

Layer 3: LLM Root Cause Analysis

Escalated events receive 3,000-5,000 tokens of context: the triggered anomaly with co-occurring anomalies, 30-minute time series before and after, rack neighbor state, tenant/job context, and recent Layer 2 decisions. The LLM responds with structured JSON: root cause, confidence, affected scope, tenant types, cross-tenant impact, causal chain, recommended actions, and priority.

Layer 4: LLM Shift Summary

Generated every 4 simulated hours (6 reports) plus one final 24-hour summary. The LLM receives approximately 3,300 tokens: cluster status, all L3 analyses compressed into one-liners, L2 event counts, SLA budget per tenant, and training job health table. Output is a structured markdown shift handoff report covering active incidents, resolved incidents, SLA status, training health, emerging risks, and next-shift recommendations.

Backends Tested

Seven LLM backends processed the identical event stream across all layers:

| Backend | Parameters | Hosting | Pricing Model |
|---|---|---|---|
| Gemma 4 26B MoE | 26B (MoE) | vLLM local (RTX 3090) | GPU electricity only |
| Gemma 4 31B Dense | 31B | vLLM local (RTX 3090) | GPU electricity only |
| Claude Sonnet 4 | Undisclosed | Anthropic API | Per-token |
| Claude Opus 4 | Undisclosed | Anthropic API | Per-token |
| Qwen 3.5 397B | 397B (MoE) | Together.ai API | Per-token |
| GPT-4o-mini | Undisclosed | OpenAI API | Per-token |
| GPT-5.4 | Undisclosed | OpenAI API | Per-token |

The two local models ran on an NVIDIA RTX 3090 (24 GB VRAM) via vLLM. All API-based backends were called through their respective platform endpoints (Anthropic, OpenAI, Together.ai), not through resale layers (Bedrock, Azure, Vertex).

All seven backends completed full 24-hour simulation runs.

Scoring Methodology

Each scenario defines a ground truth checklist: a list of facts the LLM should identify at each layer. Checklist items range from 4 (L2) to 9 (L3 for S6). Scoring uses keyword and pattern matching (case-insensitive regex) against each item. For example, “Found NVLink replay count as the root signal” matches if the response contains patterns like nvlink.*replay, nvlink.*error, nvlink.*crc, or nvlink.*count.

Score = percentage of checklist items matched per response.
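Here is a minimal sketch of the checklist scorer. An item passes if any of its case-insensitive patterns matches, and the score is the percentage of items matched. The NVLink patterns are the ones quoted above. The second checklist item and its pattern are hypothetical.

```python
import re

Checklist = list[tuple[str, list[str]]]

S6_EXCERPT: Checklist = [
    ("Found NVLink replay count as the root signal",
     [r"nvlink.*replay", r"nvlink.*error", r"nvlink.*crc", r"nvlink.*count"]),
    ("Recommended draining the straggler", [r"\bdrain"]),  # hypothetical pattern
]


def item_matched(response: str, patterns: list[str]) -> bool:
    return any(re.search(p, response, flags=re.IGNORECASE) for p in patterns)


def score(response: str, checklist: Checklist) -> float:
    """Percentage of checklist items matched by at least one pattern."""
    hits = sum(item_matched(response, patterns) for _, patterns in checklist)
    return 100.0 * hits / len(checklist)


response = "Node 17 shows elevated NVLink replay counts; checkpoint the job."
```

In this sketch `.*` does not cross line breaks because `re.DOTALL` is not set, so a mention split across lines would not match.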

This scoring is deterministic and reproducible. No LLM-as-judge, no subjective evaluation. The tradeoff is that a model could identify the correct root cause using unexpected phrasing and receive a lower score. To mitigate this, regex patterns are designed broadly (e.g., matching “nvlink” adjacent to any of several keywords rather than requiring exact phrases).

For manual qualitative review, the scorer exports judgment packages (markdown files containing scenario context, ground truth, the LLM’s full response, and a rubric) suitable for human expert or LLM-as-judge evaluation.

Results

Performance Summary

| Metric | Gemma 4 MoE | Gemma 4 Dense | Claude Sonnet 4 | Claude Opus 4 | Qwen 3.5 397B | GPT-4o-mini | GPT-5.4 |

|---|---|---|---|---|---|---|---|

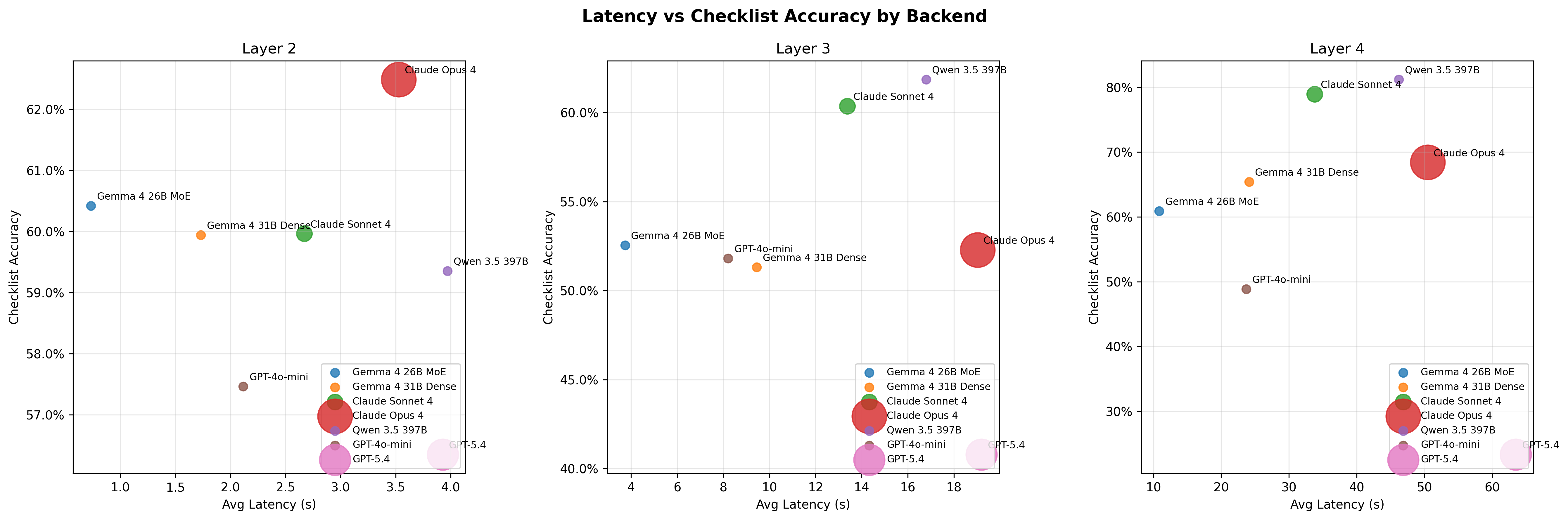

| L2 accuracy | 60.4% +/- 17.8% | 59.9% +/- 21.1% | 60.0% +/- 12.9% | 62.5% +/- 16.5% | 59.4% +/- 14.3% | 57.5% +/- 14.3% | 56.3% +/- 21.5% |

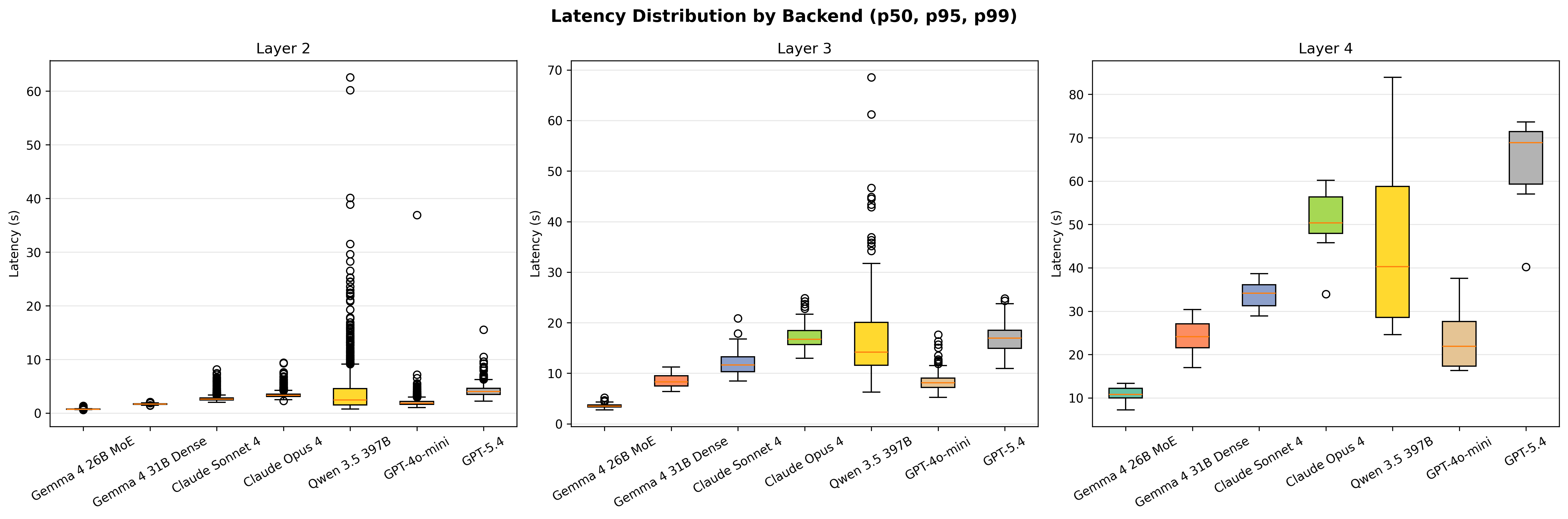

| L2 latency | 0.73s +/- 0.07s | 1.73s +/- 0.10s | 2.67s +/- 0.44s | 3.40s +/- 0.59s | 3.97s +/- 5.01s | 2.12s +/- 0.70s | 3.93s +/- 0.97s |

| L3 accuracy | 52.6% +/- 18.6% | 51.3% +/- 21.5% | 60.4% +/- 24.3% | 52.3% +/- 20.1% | 61.9% +/- 20.7% | 51.8% +/- 18.4% | 40.8% +/- 35.7% |

| L3 latency | 3.74s +/- 0.39s | 9.45s +/- 0.93s | 13.38s +/- 2.16s | 17.20s +/- 2.31s | 16.79s +/- 6.93s | 8.21s +/- 1.98s | 19.20s +/- 2.26s |

| L4 accuracy | 60.5% +/- 17.8% | 64.0% +/- 9.7% | 78.1% +/- 11.2% | 67.5% +/- 14.7% | 80.7% +/- 8.6% | 50.0% +/- 15.2% | 27.2% +/- 42.2% |

| L4 latency | 10.84s +/- 2.07s | 24.10s +/- 4.81s | 33.77s +/- 3.50s | 48.82s +/- 8.56s | 46.21s +/- 22.80s | 23.68s +/- 7.81s | 63.45s +/- 11.93s |

| Cost (24h) | $0 | $0 | $8.08 | $40.00 | $2.36 | $0.32 | $32.29 |

| GPU-hours | 0.5 | 1.1 | 0 | 0 | 0 | 0 | 0 |

Accuracy by Scenario

| Backend | S1: Thermal | S2: MoE Collapse | S3: HBM Degrad. | S4: Microbursts | S5: KV Cache | S6: Straggler |
|---|---|---|---|---|---|---|
| Gemma 4 MoE | 46% | 67% | 69% | 47% | 47% | 48% |
| Gemma 4 Dense | 39% | 81% | 65% | 40% | 53% | 46% |
| Claude Sonnet 4 | 45% | 55% | 67% | 55% | 56% | 50% |
| Claude Opus 4 | 46% | 72% | 68% | 48% | 55% | 47% |
| Qwen 3.5 397B | 52% | 64% | 67% | 49% | 50% | 54% |
| GPT-4o-mini | 44% | 58% | 67% | 42% | 48% | 46% |
| GPT-5.4 | 54% | 49% | 65% | 38% | 46% | 59% |

Latency Distribution

| Backend | L2 p50 | L2 p95 | L3 p50 | L3 p95 |
|---|---|---|---|---|
| Gemma 4 MoE | 0.72s | 0.81s | 3.51s | 4.12s |
| Gemma 4 Dense | 1.68s | 1.87s | 8.30s | 10.41s |
| Claude Sonnet 4 | 2.61s | 3.43s | 11.63s | 15.39s |
| Claude Opus 4 | 3.30s | 4.27s | 16.73s | 21.28s |
| Qwen 3.5 397B | 2.45s | 11.10s | 14.16s | 36.24s |
| GPT-4o-mini | 1.85s | 3.06s | 8.09s | 12.16s |
| GPT-5.4 | 4.02s | 5.73s | 16.94s | 21.61s |

Key Findings

Finding 1: L2 triage is a commodity. All seven backends cluster between 56.3% and 62.5% accuracy at Layer 2, and the standard deviations overlap substantially. No backend demonstrates a statistically significant advantage when classifying events as real anomaly, noise, or transient at the triage layer.

Finding 2: Model cost does not predict L3 accuracy. GPT-5.4 ($32.29/day) achieved the lowest L3 accuracy at 40.8% +/- 35.7%. Qwen 3.5 397B ($2.36/day) achieved the highest at 61.9% +/- 20.7%. The 13.7x cost difference produced an inverted accuracy ranking.

Finding 3: L4 shift summaries expose the largest quality gap. The spread from worst (GPT-5.4 at 27.2%) to best (Qwen 3.5 at 80.7%) is 53.5 percentage points. This is the layer where synthesis, prioritization, and cross-incident reasoning matter most. GPT-5.4’s high variance (+/- 42.2%) suggests inconsistent reasoning at this complexity level.

Finding 4: Local MoE achieves sub-second triage. Gemma 4 MoE's L2 latency of 0.73s +/- 0.07s is 2.9x faster than the next-fastest API backend (GPT-4o-mini at 2.12s) and 5.4x faster than GPT-5.4 at 3.93s. Its p95 latency (0.81s) stays sub-second with minimal variance. Qwen's p95, by comparison, is 11.10s, a 4.5x blowup from its p50 of 2.45s.

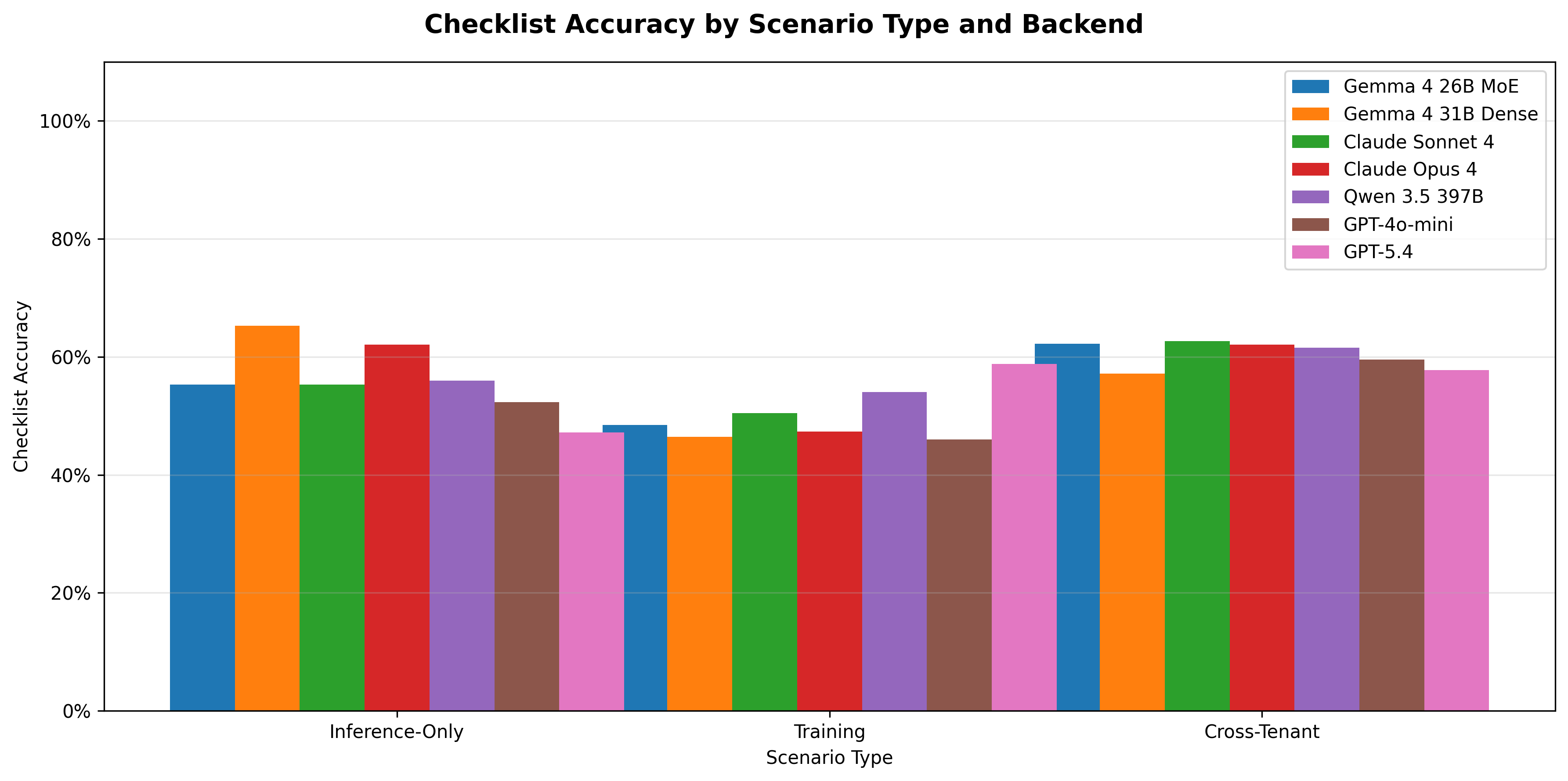

Finding 5: Scenario difficulty varies by failure mode, not backend. Thermal cascade (S1) and fabric microbursts (S4) are the hardest scenarios on average (means of 46.6% and 45.6%; ranges of 39-54% and 38-55% respectively). HBM degradation (S3) is the easiest on average (66.9%) and the most consistent across backends (65-69%). This suggests the difficulty lies in the scenario structure rather than in a backend-specific weakness.
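The per-scenario averages behind this finding can be recomputed directly from the Accuracy by Scenario table. Each list below follows the table's backend order.

```python
import statistics

SCENARIO_SCORES = {
    "S1": [46, 39, 45, 46, 52, 44, 54],
    "S2": [67, 81, 55, 72, 64, 58, 49],
    "S3": [69, 65, 67, 68, 67, 67, 65],
    "S4": [47, 40, 55, 48, 49, 42, 38],
    "S5": [47, 53, 56, 55, 50, 48, 46],
    "S6": [48, 46, 50, 47, 54, 46, 59],
}

# name -> (mean, min, max) across the seven backends
summary = {
    name: (statistics.mean(s), min(s), max(s))
    for name, s in SCENARIO_SCORES.items()
}
for name, (mean, low, high) in sorted(summary.items(), key=lambda kv: kv[1][0]):
    print(f"{name}: mean {mean:.1f}%, range {low}-{high}%")
```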

Finding 6: Cross-tenant scenarios do not consistently penalize any backend. S1, S3, S4, and S6 all involve cross-tenant reasoning. No single backend systematically underperforms on cross-tenant scenarios relative to single-tenant ones.

Discussion

Model Size Is Not Operational Intelligence

The most striking result is GPT-5.4's performance. It is the most recently released and presumably most capable model in the comparison, judging by its scores on standard benchmark evaluations (its parameter count is undisclosed). Yet its L3 accuracy of 40.8% and L4 accuracy of 27.2% are the worst in the field. The high variance on both metrics (+/- 35.7% and +/- 42.2%) suggests that the model's responses are inconsistent rather than consistently wrong. On some calls it produces thorough analysis; on others it misses critical checklist items or generates tangential content.

This is consistent with the hypothesis that general-purpose reasoning capability does not transfer directly to domain-specific operational judgment. The structured JSON output format and the need to identify specific infrastructure metrics (NVLink replay counts, expert entropy values, KV cache hit ratios) may favor models with stronger instruction following over models with broader reasoning.

The L4 Cliff

Layer 4 shift summaries require synthesizing 4 hours of cluster state, multiple incidents, SLA budget trends, and training job health into a coherent handoff report. This is qualitatively different from L2 (classify one event) or L3 (analyze one incident). The accuracy spread at L4 runs from 27.2% to 80.7%, compared to 56.3% to 62.5% at L2. This suggests that the differences between models widen sharply, rather than proportionally, as the task moves from single-event classification to multi-incident synthesis.

Qwen 3.5’s strong L4 performance (80.7% +/- 8.6%) with low variance is notable given its moderate L2 performance (59.4%). This model appears to allocate more reasoning effort to complex synthesis tasks than to simple classification, the inverse of GPT-5.4’s behavior.

Latency Tail Behavior

For a production AIOps system processing events in real time, tail latency matters as much as median. Qwen 3.5 shows a 4.5x blowup from p50 to p95 at L2 (2.45s to 11.10s) and a 2.6x blowup at L3 (14.16s to 36.24s). This is consistent with Together.ai’s shared inference infrastructure introducing variable queuing delay. By contrast, Gemma 4 MoE’s p95/p50 ratio is 1.13x at L2, reflecting dedicated local inference with no queuing.
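Here is a minimal sketch of the tail metric used here: the p95/p50 ratio, computed with the standard library's statistics.quantiles (Python 3.8+). Raw per-call latencies are not shown in this section, so the dictionary below reproduces the quoted ratios from the reported percentiles in the latency table.

```python
import statistics


def tail_ratio(latencies_s: list[float]) -> float:
    """p95/p50 ratio; values near 1.0 indicate a tight latency distribution."""
    cuts = statistics.quantiles(latencies_s, n=100, method="inclusive")
    return cuts[94] / cuts[49]  # cuts[k] is the (k + 1)-th percentile


# Ratios from the reported (p50, p95) pairs in the Latency Distribution table.
REPORTED = {
    "Gemma 4 MoE, L2": (0.72, 0.81),
    "Qwen 3.5 397B, L2": (2.45, 11.10),
    "Qwen 3.5 397B, L3": (14.16, 36.24),
}
ratios = {name: p95 / p50 for name, (p50, p95) in REPORTED.items()}
for name, r in ratios.items():
    print(f"{name}: p95/p50 = {r:.2f}x")
```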

For time-critical triage (L2), local inference eliminates the tail latency risk entirely. For deeper analysis (L3/L4), the additional latency of API backends may be acceptable given the lower call volume (165 L3 calls vs 1,440 L2 calls per 24 hours).

Cost-Performance Pareto Frontier

Three backends sit on the Pareto frontier (no other backend is both cheaper and more accurate):

- Gemma 4 MoE ($0, 0.5 GPU-hours): best latency, competitive L2 accuracy, moderate L3/L4

- Qwen 3.5 397B ($2.36): best L3 and L4 accuracy, moderate latency

- Claude Sonnet 4 ($8.08): second-best L3 and L4 accuracy, moderate latency

GPT-4o-mini ($0.32) sits near the frontier for cost-sensitive deployments willing to accept lower L4 quality (50.0%).

GPT-5.4 ($32.29) and Claude Opus 4 ($40.00) are strictly dominated at L3 and L4: both cost more than Sonnet and Qwen and score lower at both layers.

Limitations

Synthetic data. The cluster simulation, while detailed (30+ metrics, realistic baselines, seasonal patterns), is not real telemetry. Real cluster data contains noise patterns, correlated failures, and operational artifacts that synthetic generation may not capture.

Checklist scoring. Keyword matching can miss correct reasoning expressed in unexpected phrasing and can match keywords in incorrect contexts. The broad regex patterns mitigate but do not eliminate this.

Single run per backend. Each backend was tested once. Run-to-run variance (from API-side caching, load variation, and model temperature) is not captured.

Conclusion

Context Window Is Not One Size Fits All

The data contradicts the assumption that a frontier model with a million-token context window is the optimal choice across all operational tasks. The relationship between model capability and task performance inverts depending on the complexity of the input context.

At Layer 2 and Layer 3, where the input context is small (890 tokens average for L2, 2,724 tokens for L3) and the task is focused pattern recognition, frontier models underperform or match models a fraction of their size and cost. GPT-5.4 scored 56.3% at L2 and 40.8% at L3. Gemma 4 MoE (26B parameters, local, free) scored 60.4% and 52.6% on the same tasks. The frontier model’s architecture, optimized for long-context reasoning, multi-turn dialogue, and complex instruction following, appears to work against it when the task is a straightforward classification or a focused root cause analysis with a small evidence window. These models are built for major quests. Small-context operational triage is garden work.

At Layer 4 the relationship flips. Here the input context grows to 4,379 tokens of synthesized cluster state, and the task requires cross-incident reasoning, trend identification, and structured reporting. Qwen 3.5 397B achieves 80.7% accuracy, Claude Sonnet 4 reaches 78.1%, and Claude Opus 4 hits 67.5%. GPT-5.4 collapses to 27.2% in its single run. The models designed for depth and synthesis perform according to their design intent when given a task that actually demands depth and synthesis.

The Sub-Second Pipeline

The MoE architecture at Layer 2 produces a behavior analogous to a vector database operating with both dense and sparse indexing: the response is prompt, precise, and pipeline-grade. At 0.73 seconds average latency with a p95 of 0.81 seconds, the local MoE model functions as a real-time signal filter. It does not deliberate. It classifies, scopes, and routes.

Of the 1,440 events generated per day by the statistical detection layer, 88.5% (1,275 events) are resolved at Layer 2 in under one second. The remaining 11.5% (165 events) escalate to Layer 3 for root cause analysis. Using the local MoE for both layers, the complete triage-to-root-cause pipeline completes in 4.28 seconds (0.73s + 3.55s). Every anomaly event in the cluster, from detection to diagnosis, is processed in under five seconds.

That is not a benchmark metric. That is a mean time to response for autonomous anomaly classification across 8,000 GPUs. A human operator triaging the same event volume (one event every 60 seconds, 24 hours a day) would require continuous staffing with no capacity for the deeper analysis that the 165 escalated events demand.

The Economics of Tiered Architecture

The data supports a three-tier deployment where each layer uses the model architecture matched to its task profile:

| Layer | Model | Calls/Day | Tokens/Day | Latency | Accuracy | Cost/Day |
|---|---|---|---|---|---|---|
| L2 (Triage) | Gemma 4 MoE (local) | 1,440 | 1.4M in, 126K out | 0.73s | 60.4% | $0 |
| L3 (Root Cause) | Qwen 3.5 397B (API) | 165 | 451K in, 102K out | 17.34s | 61.9% | $0.50 |
| L4 (Shift Summary) | Qwen 3.5 397B (API) | 7 | 26K in, 12K out | 46.01s | 80.7% | $0.03 |
| Total | | 1,612 | | | | $0.53 |

The optimal tiered architecture costs $0.53 per day. One might substitute Claude Opus 4 at Layer 4 on the assumption that the most expensive model gives the best synthesis. Doing so raises the total to $1.60 per day while lowering L4 accuracy from 80.7% to 67.5%. Running Claude Opus 4 across all layers costs $40.00 per day, a 75x premium over the tiered approach, with worse L3 accuracy (52.3% vs 61.9%) and worse L4 accuracy (67.5% vs 80.7%).

The L4 layer consumes 38,170 tokens per day across 7 calls. At frontier model pricing, that is $0.03 (Qwen), $0.22 (Sonnet), or $1.10 (Opus) per day. The state-of-the-art shift synthesis, structured input to structured output with cross-incident reasoning, costs less than a single API call at L2 volume. Reserving large models for the layers that need them, rather than running one frontier model as a general-purpose backend, cuts the daily cost by roughly two orders of magnitude: $40.00 for Opus at every layer versus $0.53 tiered. It also improves L3 and L4 accuracy, and the L2 gap (62.5% vs 60.4%) falls within the overlap described in Finding 1.
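The daily totals above reduce to a few lines of arithmetic. This sketch uses only the per-layer costs and call counts from the tiered table and the Opus figures quoted in the text.

```python
# Layer -> (daily cost in USD, calls per day) for the tiered deployment.
TIERED = {
    "L2": (0.00, 1440),  # Gemma 4 MoE, local
    "L3": (0.50, 165),   # Qwen 3.5 397B
    "L4": (0.03, 7),     # Qwen 3.5 397B
}
OPUS_L4_PER_DAY = 1.10
OPUS_ALL_LAYERS_PER_DAY = 40.00

tiered_cost = sum(cost for cost, _ in TIERED.values())
tiered_calls = sum(calls for _, calls in TIERED.values())
opus_at_l4_cost = tiered_cost - TIERED["L4"][0] + OPUS_L4_PER_DAY
all_opus_premium = OPUS_ALL_LAYERS_PER_DAY / tiered_cost

print(f"tiered: ${tiered_cost:.2f}/day over {tiered_calls:,} calls")
print(f"Opus at L4 only: ${opus_at_l4_cost:.2f}/day")
print(f"Opus everywhere: {all_opus_premium:.0f}x the tiered cost")
```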

Operational Implications

A human operations team monitoring 8,000 GPUs processes alerts, investigates incidents, and writes shift handoffs. The tiered LLM architecture does not replace the team. It changes what the team spends time on.

At Layer 2, 1,275 events per day are classified as noise or transient in under one second. Without automated triage, each of those events would require a human operator to open a dashboard, check context, and decide whether to investigate. At 2 minutes per manual triage, that is 42.5 hours of human labor per day absorbed by a model running on a single GPU.

At Layer 3, 165 events per day receive root cause analysis with recommended actions. The human operator reviews a structured diagnosis rather than starting from raw telemetry. At Layer 4, the shift handoff report arrives pre-written, covering every incident, SLA status, and emerging risk from the preceding 4 hours.

The false positive burden, which in GPU fleet operations generates the majority of alert fatigue and response degradation, is absorbed entirely by the sub-second triage layer at zero marginal cost.

Reproducibility

The benchmark code, data generation pipeline, scoring methodology, and all raw results are available at github.com/sch0tten/gpu-fleet-aiops-bench under an open-source license.